EyeQube Engine is a next-generation decision engine. With it, you can design processes that include decision steps in various financial fields, such as loan application evaluation and limit determination, and run them quickly and safely.

Its user-friendly interface lets users create and manage data flows and items effortlessly with drag and drop.

Its rich set of components (scorecard, script, decision tree, segmentation, analytical model import, etc.) makes it possible to realize any desired scenario in decision flows.

Each change is versioned and goes through an approval process, in line with both personal data protection requirements and the maker/checker structure.

Since it runs on Kubernetes, it provides high scalability to increase application performance and efficiency.

Its flexible structure makes the data dictionary easy to manage, with no software changes required.

Creating flows with a drag-and-drop structure is very easy. Many flows can be designed using 10+ components.

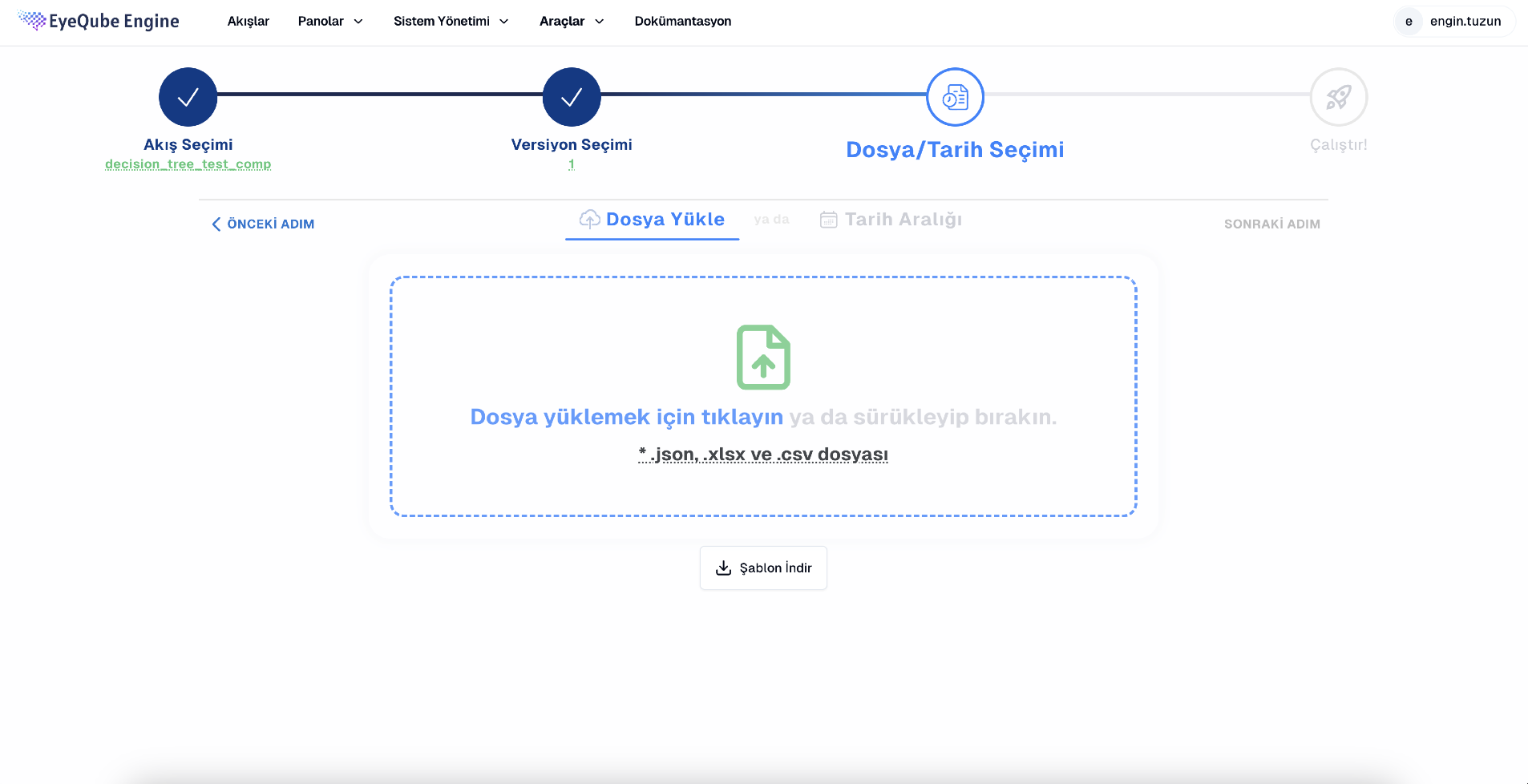

Flows can be tested with synthetic data before going live, and results can be viewed.

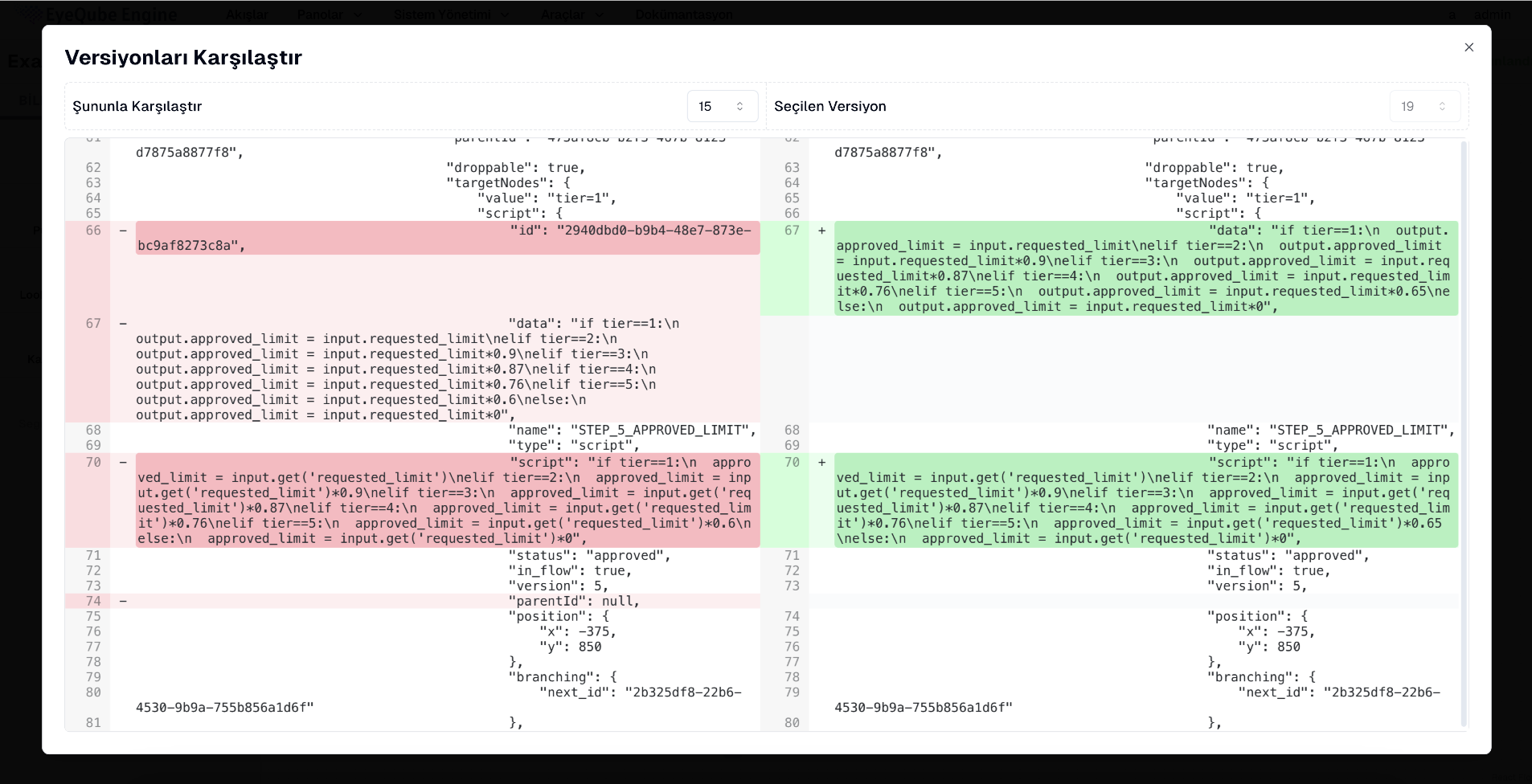

Every change is recorded and versioned. At any time, it is possible to see who made which change and when.

VIP lists and blacklists can be created and used as checks in flows.

A secure and regulatory-compliant mechanism is provided for changes in flows and production, ensuring both approval and control.

Changes can be applied either directly from the application or through export/import rules, allowing for seamless deployment.

The EyeQube Engine interface supports multiple languages, allowing users to choose their preferred language.

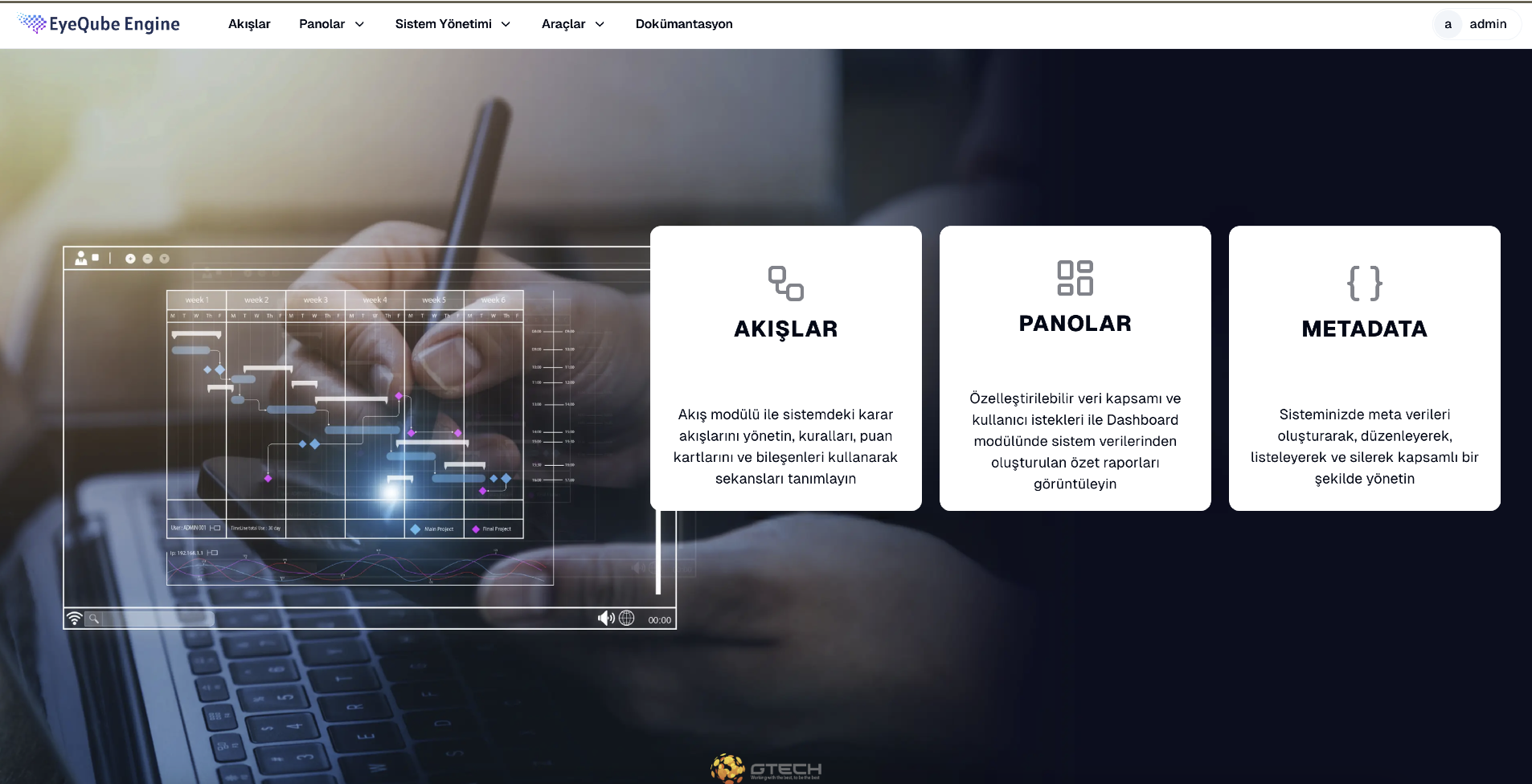

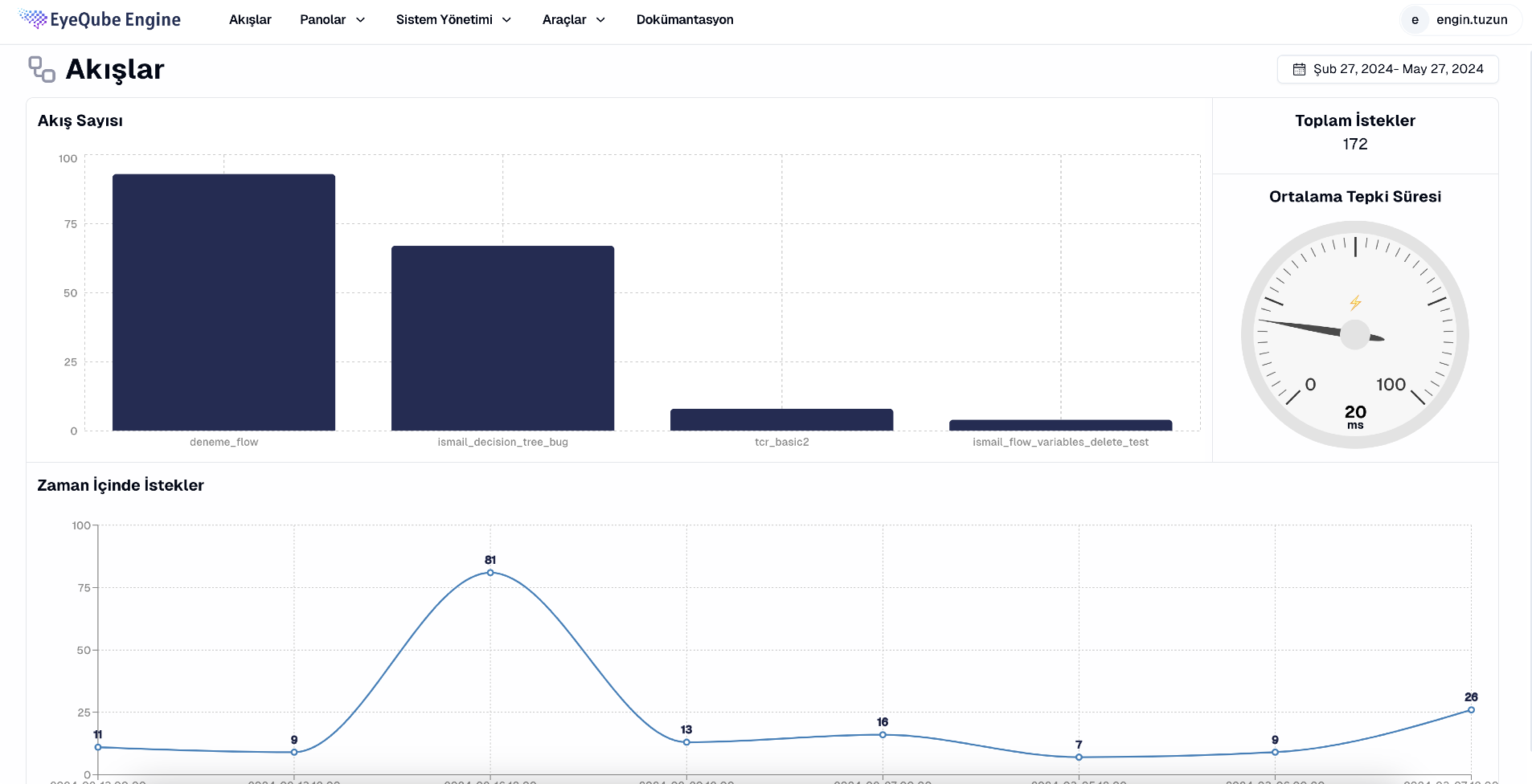

Flows, Dashboards and Metadata pages can be accessed.

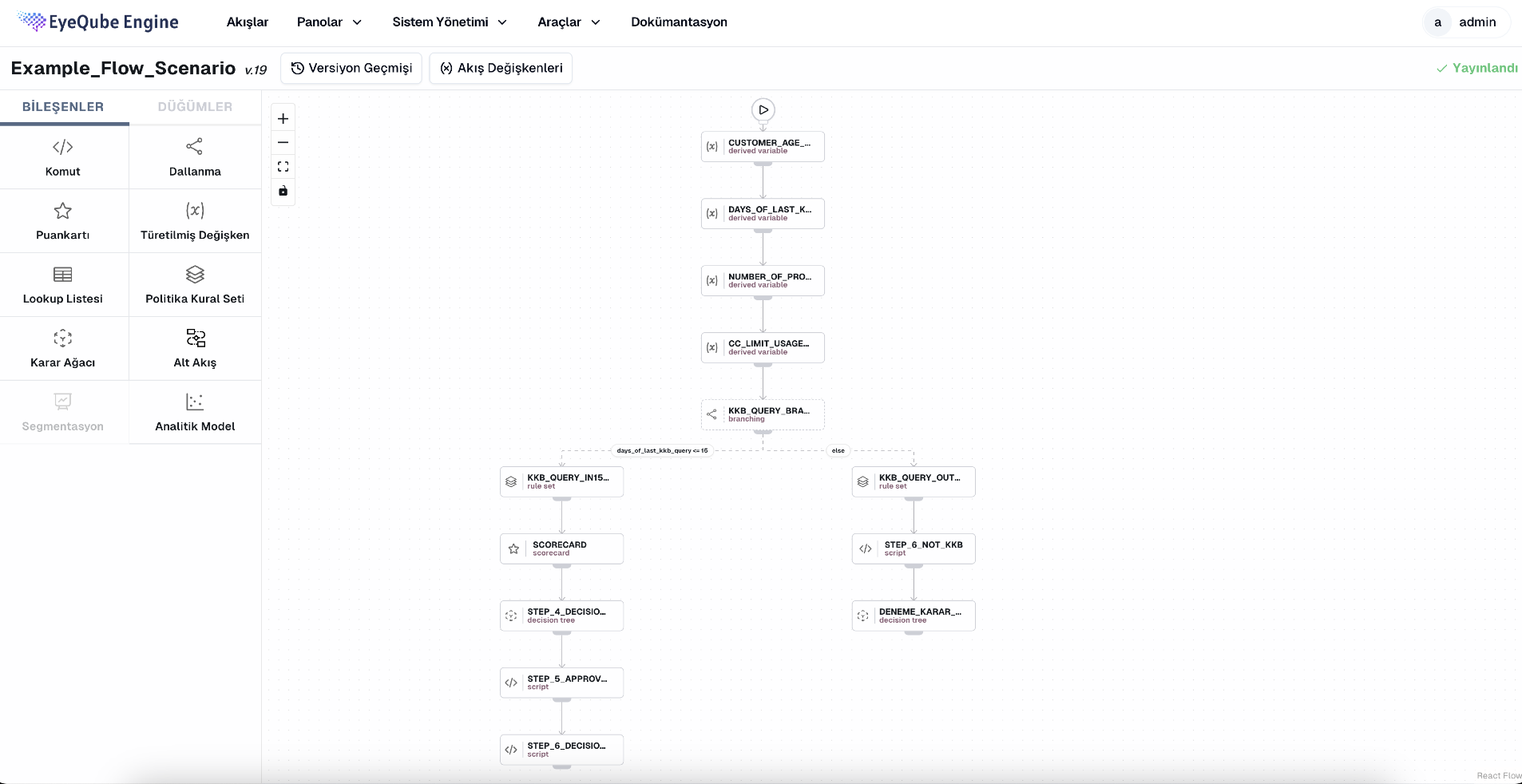

Flows can be built and edited on the built-in Designer page.

You can download the file that matches your flow and use it to simulate data.

You can easily view data from your flows in various graphs.

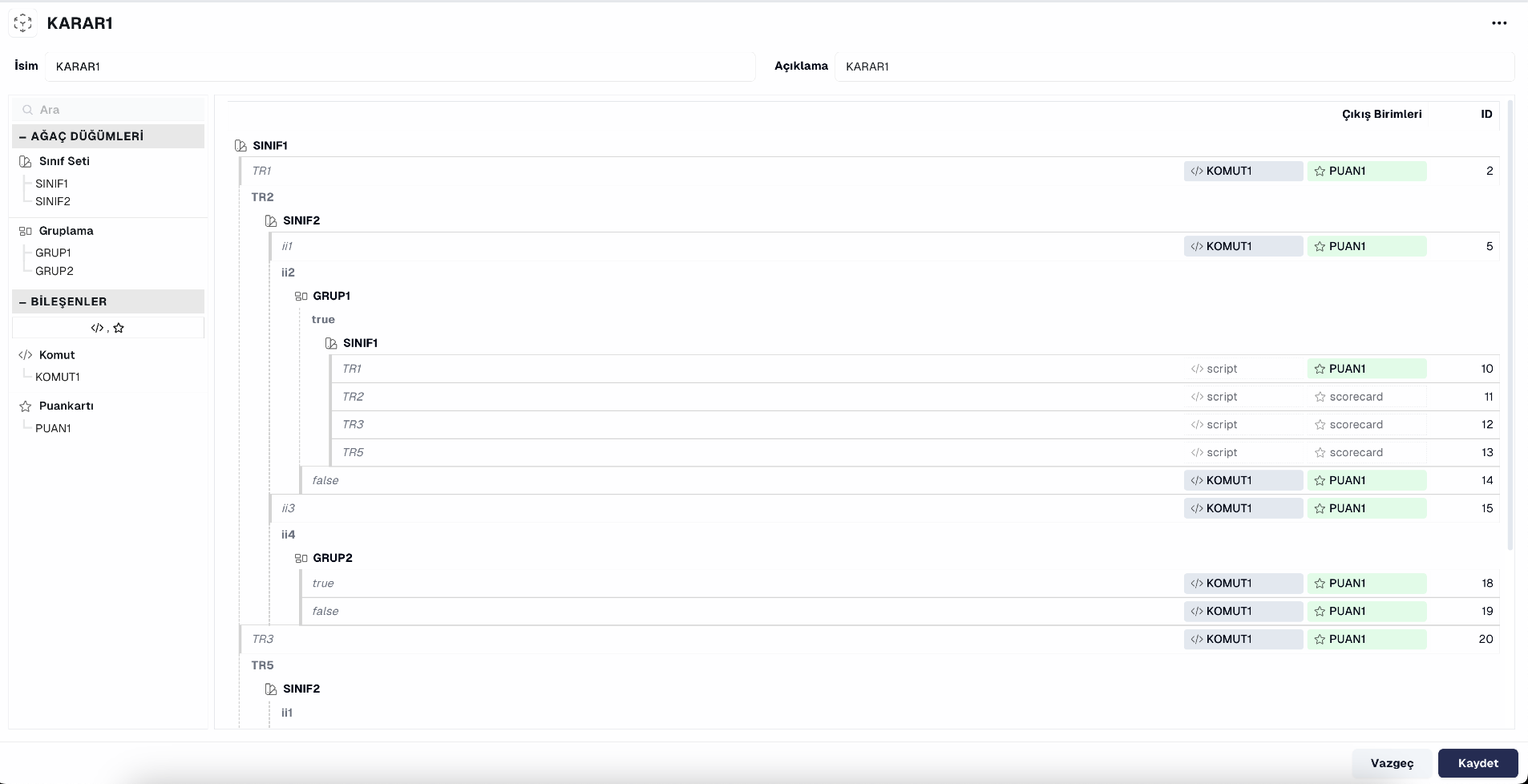

It allows you to create/manage any desired scenario in decision flows.

It allows you to compare different selected versions.






EyeQube Engine has streamlined our loan application evaluations. The drag-and-drop interface simplifies designing custom decision flows, cutting down manual processing time. Retro analysis lets us test these flows with synthetic data, ensuring reliability before going live. This tool has drastically improved our efficiency and accuracy.


EyeQube Engine has greatly improved our credit limit determination processes. The versioning feature ensures every change is documented and approved, providing a clear audit trail for compliance. Running on Kubernetes, the system scales effortlessly to meet our growing user base, enhancing overall performance and reliability.

Our expert team, which you can trust to add value to your data, understands how important data is to your business and applies deep field experience to it, making use of every layer, field, and meaning of the right information.

We are here to produce specially designed solutions for your needs and to apply them carefully to your business.

EyeQube Engine is designed with the ability to make complex decisions based on business rules, data and machine learning technologies. Thanks to its user-friendly interface and flexible authorization management features, it can be customized to meet the needs of many businesses.

For example, it can be used to set an offer price at an insurance company, to approve a loan application at a bank, to segment customers at a retail company, and in many similar scenarios. Decisions are made in real time and are reliable, so enterprises increase productivity by making fast and accurate decisions with EyeQube Engine.

Users can create and manage data flow and items effortlessly thanks to the "drag and drop" feature available in the interface. This centralizes everything needed for data analytics and processing, so that time and energy can be used more efficiently.

Authority can be managed and roles assigned among users, which makes using and administering the application fast and effective. A single task can be assigned to a user. LDAP integration lets it work in harmony with your existing user management system.

It has a flexible REST API structure that allows it to be easily integrated with other systems. In this way, users can use the data and features offered by the application on their own systems or share them with other systems.
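As a rough illustration of what such an integration could look like from a client system, the sketch below builds a JSON request for a decision flow using only the Python standard library. The base URL, path, payload shape, and token are hypothetical placeholders, not the documented EyeQube Engine API; the real values must come from your own installation.

```python
import json
from urllib.request import Request

# Hypothetical values: the real base URL, path, and payload schema are not
# described on this page and must be taken from your own installation.
BASE_URL = "https://eyeqube.example.com"
FLOW_PATH = "/api/flows/loan-approval/execute"


def build_decision_request(applicant: dict, token: str) -> Request:
    """Build (but do not send) a JSON POST request for a decision flow."""
    body = json.dumps({"input": applicant}).encode("utf-8")
    return Request(
        url=BASE_URL + FLOW_PATH,
        data=body,
        method="POST",
        headers={
            "Content-Type": "application/json",
            "Authorization": f"Bearer {token}",
        },
    )


request = build_decision_request(
    {"age": 34, "monthly_income": 5200}, token="demo-token"
)
print(request.get_method(), request.full_url)
```

Passing the request to `urllib.request.urlopen` would send it; the sketch stops short of that so it can be run without a server.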

Users can create versions to track the changes and additions they make to a project, restore a version, or compare the current version with a different one. In this way, the progress of projects can be monitored.
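To make the idea of comparing versions concrete, here is a minimal sketch that reports which settings differ between two versions of a flow. Representing a version as a flat dictionary, and the names `diff_versions`, `min_score`, `max_limit`, and `vip_bypass`, are assumptions made for illustration; they do not describe how EyeQube Engine stores or compares versions.

```python
def diff_versions(old: dict, new: dict) -> dict:
    """Return {setting: (old_value, new_value)} for every setting that differs.

    A setting missing from one version is reported with None on that side.
    """
    changes = {}
    for key in sorted(old.keys() | new.keys()):
        before, after = old.get(key), new.get(key)
        if before != after:
            changes[key] = (before, after)
    return changes


# Two hypothetical versions of the same flow configuration.
version_1 = {"min_score": 40, "max_limit": 10000}
version_2 = {"min_score": 45, "max_limit": 10000, "vip_bypass": True}

changes = diff_versions(version_1, version_2)
for setting, (before, after) in changes.items():
    print(f"{setting}: {before} -> {after}")
```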

Because it runs on Kubernetes, it offers high scalability to improve application performance and efficiency. In this way, it can respond to future increases in demand or other needs.

Users can view incoming requests; flow-, item-, and result-based reports; and application logs.

With this feature, users can monitor performance and requests in the system as they happen and intervene where necessary. Transactions in the system are logged and can be displayed.

It provides many options for authorizing and managing users. Users can log in with LDAP or database authentication, and if they forget their password, they can reset it with the "Forgot Password" feature.

With role-based authorization, the pages and permissions each user can access can be defined, and the transactions users perform are logged and displayed.

Flows define and manage the data processing and analysis process. Users can create, edit, filter, version, and restore flows.

Using "Call"-based logic, a flow defines and manages every step of the data processing and analysis process. A user-friendly interface is provided for listing, filtering, and editing flows. With the versioning feature, users can restore old versions or compare existing ones. Flows also support the maker/checker structure and are easy to use thanks to the drag-and-drop front end.

Items let users define their own operations and build their own flows. Each item runs the code or rules the user has created. Versioning, copying, and restore are also available, so users can carry out their operations more quickly and effectively. The available items include Script, Split, Scorecard, Lookup, Policy Rules, Decision Tree, Segmentation, and Derived Variable.

The Script item supports Python and lets users write and run code. IntelliSense helps prevent errors while the code is being written, and validation checks its correctness. Python functions can be used as well. The item lets users check their code and return results, call Lookup methods, and use the versioning features.

With the Split item, users can divide their data according to certain criteria by selecting variables and writing conditions.

The Scorecard item lets users calculate scores by selecting variables and writing conditions. With the versioning feature, earlier scorecard versions can be viewed and restored.
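The scoring idea described above, selecting variables and attaching points to conditions, can be sketched in a few lines of Python. This is only an illustration of the general scorecard technique: the `ScoreRule` class, the variable names, and the point values are invented for the example and are not taken from EyeQube Engine.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class ScoreRule:
    """One scorecard row: a variable, a condition on it, and the points awarded."""
    variable: str
    condition: Callable[[float], bool]
    points: int


# Hypothetical rules; a real scorecard would be configured by the user.
RULES = [
    ScoreRule("age", lambda v: v < 25, 10),
    ScoreRule("age", lambda v: v >= 25, 25),
    ScoreRule("monthly_income", lambda v: v < 3000, 5),
    ScoreRule("monthly_income", lambda v: v >= 3000, 30),
]


def score(applicant: dict, rules=RULES) -> int:
    """Sum the points of every rule whose condition holds for the applicant."""
    return sum(r.points for r in rules if r.condition(applicant[r.variable]))


total = score({"age": 34, "monthly_income": 5200})
print(total)  # 25 (age >= 25) + 30 (income >= 3000) = 55
```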

With the Lookup item, users can upload data from a file, access data from previously created lists, create as many lists as they want, and retrieve data quickly.
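A list loaded from a file and then queried by key might look like the following sketch. The CSV layout, the `load_lookup` and `is_blacklisted` helpers, and the customer IDs are assumptions for illustration only; they do not reflect the product's actual file format or Lookup methods.

```python
import csv
import io


def load_lookup(csv_text: str, key_column: str) -> dict:
    """Index the rows of a CSV document by the value in key_column."""
    reader = csv.DictReader(io.StringIO(csv_text))
    return {row[key_column]: row for row in reader}


# Hypothetical blacklist file contents.
BLACKLIST_CSV = """customer_id,reason
C-1001,fraud
C-2040,chargeback
"""

blacklist = load_lookup(BLACKLIST_CSV, "customer_id")


def is_blacklisted(customer_id: str) -> bool:
    """Check whether a customer appears in the loaded list."""
    return customer_id in blacklist


print(is_blacklisted("C-1001"), is_blacklisted("C-9999"))
```

Indexing the rows in a dictionary keeps each check a constant-time lookup, which matters when a flow consults the list on every request.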

The Policy Rules item lets users add rules on a script basis. Users can write the rules they will apply to the data from the front end, view the multiplication and explanation outputs of each rule, and easily check the accuracy and effects of the rules in the data analytics process.

The Decision Tree item is used for decision-making processes. Users can create a decision tree from the front end by selecting variables and defining splits, and they can assign a class or route the flow to another item. It is designed to speed up users' decision-making by using data analytics results.
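The way a tree built from variables and splits arrives at a class can be shown with a small hand-written example. The `Branch` and `Leaf` types, the thresholds, and the class labels below are hypothetical and only illustrate the general technique, not the item's internal representation.

```python
from dataclasses import dataclass
from typing import Union


@dataclass
class Leaf:
    """Terminal node: the class assigned to the record."""
    label: str


@dataclass
class Branch:
    """Internal node: compares one variable against a threshold."""
    variable: str
    threshold: float
    below: "Node"        # followed when value < threshold
    at_or_above: "Node"  # followed when value >= threshold


Node = Union[Leaf, Branch]

# Hypothetical tree: low scores are rejected, then income decides the rest.
TREE = Branch(
    "score", 40,
    Leaf("REJECT"),
    Branch("monthly_income", 3000, Leaf("MANUAL_REVIEW"), Leaf("APPROVE")),
)


def decide(node: Node, record: dict) -> str:
    """Walk from the root to a leaf and return the leaf's class label."""
    while isinstance(node, Branch):
        if record[node.variable] < node.threshold:
            node = node.below
        else:
            node = node.at_or_above
    return node.label


print(decide(TREE, {"score": 55, "monthly_income": 5200}))
```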

The Segmentation item lets the user select variables in the data set and build a clustering model. The user can upload the training data and view the model results. In this way, different groups or segments in the data set can be discovered and analyzed.

The Derived Variable item lets users derive variables in Python, with IntelliSense and validation support to make writing code easier, and process data using Lookup methods and Python functions.
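A derived variable is simply a new field computed from existing ones. The sketch below shows the idea in plain Python; the function name, the input fields, and the two derived fields are made up for the example and do not represent the item's actual scripting interface.

```python
def derive_variables(record: dict) -> dict:
    """Return a copy of the record with two derived fields added."""
    derived = dict(record)
    income = record["monthly_income"]
    # Guard against division by zero when no income is reported.
    derived["debt_to_income"] = (
        round(record["monthly_debt"] / income, 4) if income > 0 else None
    )
    derived["is_young_applicant"] = record["age"] < 25
    return derived


result = derive_variables(
    {"age": 34, "monthly_income": 5200, "monthly_debt": 1300}
)
print(result["debt_to_income"], result["is_young_applicant"])
```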

Retro analysis lets users review data analytics and results retrospectively, so the performance and impact of past transactions can be evaluated.

Users can observe the results of past decision trees and segmentations, which helps them make better decisions and improve their data analytics processes. It helps users understand the reasons behind past transactions and make future ones more effective.

Flows can be hot-deployed on a per-version basis, so users can see and test their changes in the application immediately.

The Metadata page lets users learn more about their data sets. It displays the sources, sizes, fields, and other important information of data sets.

In addition, user-generated tags and descriptions for data sets can be displayed, which makes data management and the analytical process more effective.
